Why SVG Generation Needs a New Scorecard

Haonan Zhu, Adrienne Deganutti, Purvanshi Mehta

TL;DR

SVG is widely used on the web, yet producing production-ready vector assets remains costly and difficult to automate. SVG generation spans text, image, web, and multimodal workflows. Current methods are either classical tracing algorithms, which preserve topology but degrade on complex imagery, or machine learning methods, which may be structurally sound but visually inaccurate. Existing evaluation methods are often misaligned with practical usability. Better metrics are needed to capture production requirements such as structural and semantic alignment, design-relevant visual quality, and editability.

What is an SVG file?

Graphic design is a highly iterative process: you sketch, refine, and revise in cycles until the image matches your vision. SVG (Scalable Vector Graphics) is a file format where a drawing is stored as editable instructions such as "draw this curve," "fill this shape," "move or scale this group" instead of pixels.

The animation below is a helpful mental model for a graphic designer's step-by-step SVG production process; SVG can be seen (loosely) as a "mathematical recipe" for the entire drawing process.

Figure 1: Each SVG command adds a small piece, and together they reconstruct the full design like a precise blueprint for the drawing. "Paint Class GIF" — Carlotta Notaro (Behance, published Aug 11, 2019). [16]

SVG code describes shapes, strokes, fills and transforms using a structured syntax. The rendering process (i.e., converting SVG to a viewable image asset such as PNG) can be understood as a drawing pipeline that interprets each element step-by-step to gradually compose the final image.
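To make the "drawing pipeline" idea concrete, here is a minimal Python sketch that lists the drawable elements of an SVG in the order a renderer would paint them. The SVG string and the `paint_order` helper are illustrative assumptions, not taken from the figures in this post; the sketch only relies on the fact that SVG elements are painted in document order.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"

# A tiny hand-written SVG (illustrative only, not from the post's figures).
svg_source = f"""<svg xmlns="{SVG_NS}" viewBox="0 0 100 100">
  <rect id="sky" width="100" height="100" fill="#87ceeb"/>
  <g id="sun" transform="translate(70 30)">
    <circle r="12" fill="#ffd700" stroke="#e6a800" stroke-width="2"/>
  </g>
  <path id="hill" d="M0 80 Q50 40 100 80 V100 H0 Z" fill="#2e8b57"/>
</svg>"""

DRAWABLE = {"rect", "circle", "ellipse", "line", "polyline", "polygon", "path", "text"}


def paint_order(svg_text):
    """Return (tag, id) pairs for drawable elements in document order.

    Renderers paint elements in document order, so later entries are
    drawn on top of earlier ones.
    """
    root = ET.fromstring(svg_text)
    steps = []
    for elem in root.iter():  # depth-first, document order
        tag = elem.tag.split("}")[-1]  # strip the XML namespace
        if tag in DRAWABLE:
            steps.append((tag, elem.get("id")))
    return steps


steps = paint_order(svg_source)
for i, (tag, elem_id) in enumerate(steps, start=1):
    print(f"step {i}: draw <{tag}> id={elem_id}")
```

Here the background rectangle is painted first, then the circle inside the translated group, then the hill path on top.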

Figure 2: SVG code generated by GPT-5.2 shown as a step-by-step rendering process.

Why are SVGs Important?

SVG is among the most widely used image formats on the web (about 65% of websites [2]). A major reason is that SVG represents graphics as editable drawing instructions rather than pixels [3]. In practice, this brings several advantages:

- High-resolution at any size: SVGs scale cleanly without becoming blurry, which is ideal for high-resolution and responsive screens. The same SVG file can be used on a mobile app or a building-sized billboard.

Figure 3: Raster images (JPG/PNG) are pixel-based and blur when scaled, whereas vector images (SVG) use mathematical paths and stay crisp at any size. [17]

- Lightweight and easy to maintain: SVG file sizes are often small because the image is stored as compact shape instructions rather than pixel data. And because SVG is text-based, files are easy to edit and reuse across projects [3,4].

Figure 4: Comparison of Pinterest logo file sizes for a high-quality render across JPG, PNG, and SVG. [18]

- Searchable and accessible: elements and labels can be indexed and searched, and text can remain real text (supporting accessibility tools such as screen readers) [3,5]. As a result, icons, diagrams, and text in SVG files are discoverable via site search, docs search, and in-browser 'Find'. This also makes them easier to maintain and edit, since individual elements can be reliably targeted (e.g., by IDs/classes).

- Web-native and interactive: SVGs integrate well with the web platform. You can style them, script them, and animate them, which makes them widely used for logos, icons, charts, infographics, and illustrations, where clarity and interactivity matter [3,4,5].

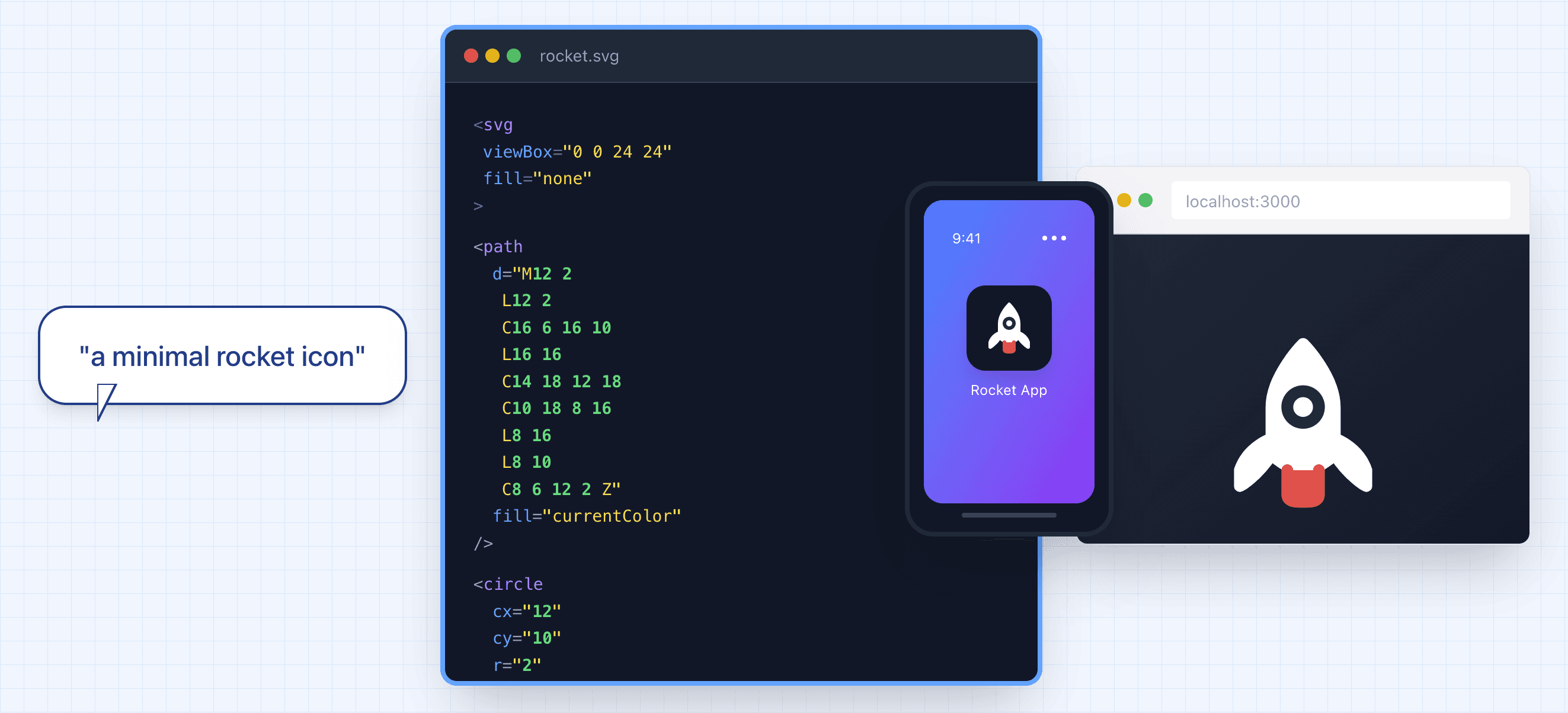

Figure 5: SVG icons are lightweight and stylable, making them ideal for web interfaces.
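As a small illustration of targeting individual elements by ID, the Python sketch below recolors one shape in an icon without touching the rest. The icon markup, the IDs `badge` and `check`, and the `recolor` helper are all hypothetical; only the standard-library `xml.etree.ElementTree` calls are real.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)  # serialize without an "ns0:" prefix

# Hypothetical icon; the ids are made up for this example.
icon = f"""<svg xmlns="{SVG_NS}" viewBox="0 0 24 24">
  <circle id="badge" cx="12" cy="12" r="10" fill="#cccccc"/>
  <path id="check" d="M7 12 l3 3 l7 -7" fill="none" stroke="#ffffff"/>
</svg>"""


def recolor(svg_text, element_id, fill):
    """Set the fill of the element with the given id and return new SVG text."""
    root = ET.fromstring(svg_text)
    for elem in root.iter():
        if elem.get("id") == element_id:
            elem.set("fill", fill)
    return ET.tostring(root, encoding="unicode")


themed = recolor(icon, "badge", "#e60023")
print(themed)
```

The same edit on a PNG would require repainting pixels; here it is a one-attribute change on a named element.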

SVG's advantages are appealing, but producing high-quality vector assets remains costly in production.

"Vector-ready" files often require both artistic judgment and practical constraints (clean shapes, consistent structure, and reliable performance). In many teams, that cost shows up as specialized roles, frequent raster-to-vector conversions, and handoff overhead; a single detailed illustration can cost more than $1500 (estimated from [15]).

SVG Generation: State of the Art

Figure 6: SVG generation workflows

In practice, SVG generation can be broadly categorized into four workflows, depending on whether you're creating new assets, converting existing visuals, extracting vectors from existing digital content, or combining these approaches:

| Task | Use Case |

|---|---|

| Text-to-SVG (created from intent) | Used when you want new vector assets from a description (icons, illustrations, diagrams) in an in-house style. |

| Image-to-SVG (convert existing visuals) | Used when you start from existing visuals in pixels (e.g., PNGs, JPEGs) and need them in vector format (logos, flat art, screenshots, scanned drawings). There are two subtasks: (1) image vectorization (trace), which recovers shapes and curves from pixels; and (2) reference-guided generation (reinterpret), which keeps the composition or style cues but outputs a simplified or standardized vector asset. |

| Web-to-SVG (extract/translate from digital structure) | Used when the source is already digital and structured (web pages, UI, charts, diagrams). In practice, this usually falls into one of three cases: (1) direct extraction, where the page already contains SVG assets; (2) structure translation (DOM/CSS/layout → SVG); and (3) raster fallback (canvas/WebGL → screenshot → vectorization). |

| Multimodal SVG Generation (combine inputs) | Used when multiple sources of input (e.g., text description and image) are available. |

Table 1: Different SVG generation workflows

Text-to-SVG and image-to-SVG are the core building blocks that multimodal and web-based pipelines often rely on. Hence, this post focuses on these two tasks, which share the same goal: turning an input into an editable vector asset.

There are two families of approaches to address the SVG generation problem:

Figure 7: Overview of SVG generation methods

- Image Processing-Based

Classical image vectorization methods typically rely on edge detection. For instance, the Potrace algorithm traces the boundaries between black and white pixels. Because these methods enforce spatial logic rather than interpreting the image semantically, they preserve topology well: there is no notion of "what object this is," only "where the boundary lies." However, their performance degrades as image complexity increases.

- Machine Learning-Based, which consists of:

SVG generation guided by image generation models (e.g., diffusion models) has two main steps: 1) generate an initial raster image with an existing image generation model such as Google's Nano Banana, and 2) convert the generated image into SVG code using either the classical vectorization algorithms mentioned above or optimization-based methods. These methods render to high-quality images, but the SVG code they produce is hard to edit.

Model-based code generation uses a trained (vision) language model, such as OpenAI's ChatGPT, to output SVG code directly, token by token, from a user prompt or image. While offering greater editability, these methods are limited to simple SVG files such as icons, and the quality of the generated image degrades noticeably on samples that differ from the training data.
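To illustrate the "only where the boundary lies" idea behind classical tracing, here is a deliberately simplified Python sketch. It is not Potrace: it assumes a tiny binary grid containing a single blob with no holes and no diagonal-only contacts, collects the unit edges that separate black cells from white ones, chains them into a closed loop, and emits a straight-line SVG path. Real tracers go further, for example by fitting smooth curves. The function names `trace_boundary` and `to_path` are made up for this example.

```python
def trace_boundary(grid):
    """Trace the outline of the black (1) region in a binary grid.

    Assumes one non-empty blob without holes or diagonal-only contacts.
    Returns the corner points (x, y) in clockwise screen order.
    """
    rows, cols = len(grid), len(grid[0])

    def is_black(r, c):
        return 0 <= r < rows and 0 <= c < cols and grid[r][c] == 1

    # Directed unit edges separating a black cell from a white neighbor.
    next_point = {}
    for r in range(rows):
        for c in range(cols):
            if not is_black(r, c):
                continue
            if not is_black(r - 1, c):
                next_point[(c, r)] = (c + 1, r)          # top edge
            if not is_black(r, c + 1):
                next_point[(c + 1, r)] = (c + 1, r + 1)  # right edge
            if not is_black(r + 1, c):
                next_point[(c + 1, r + 1)] = (c, r + 1)  # bottom edge
            if not is_black(r, c - 1):
                next_point[(c, r + 1)] = (c, r)          # left edge

    # Chain the edges into one closed loop.
    start = min(next_point)
    loop, point = [start], next_point[start]
    while point != start:
        loop.append(point)
        point = next_point[point]

    # Keep only the points where the direction changes.
    corners = []
    n = len(loop)
    for i, (x, y) in enumerate(loop):
        px, py = loop[i - 1]
        nx, ny = loop[(i + 1) % n]
        if (x - px, y - py) != (nx - x, ny - y):
            corners.append((x, y))
    return corners


def to_path(corners):
    """Format corner points as an SVG path 'd' string made of line segments."""
    head, *tail = corners
    lines = " ".join("L{} {}".format(x, y) for x, y in tail)
    return "M{} {} ".format(*head) + lines + " Z"


grid = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
corners = trace_boundary(grid)
path_d = to_path(corners)
print(path_d)  # M1 1 L3 1 L3 3 L1 3 Z
```

Nothing in the sketch knows what the blob depicts, which is why this family of methods preserves topology but has no way to simplify a shape based on its meaning.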

Evaluation Metrics

With all these approaches for SVG generation, how do we quantitatively decide which SVG is more usable than the others?

The existing metrics in the literature can be organized into the following categories:

| Metric Category | Description | Examples |

|---|---|---|

| Alignment Scores | Measures how "aligned" the generated SVG is to the input (text or image) | CLIP Score, DinoScore [19] |

| Pixel-based Metrics | Measures how "faithful" the generated SVG (after rendering) is to the reference image | Mean Squared Error (MSE), Structural Similarity Index (SSIM), Learned Perceptual Image Patch Similarity (LPIPS) |

| Distribution-level Metrics | Measures dataset-level similarity between the generated SVGs and the original images | Fréchet Inception Distance (FID), FID-CLIP [20] |

| Human Preference Scores | Models trained on human preference data to score images | HPSv2, PickScore, ImageReward [21] |

| Code-level Metrics | Assesses how "complex" the generated SVG code is | Code length, Number of paths, Number of commands [14] |

Table 2: Overview of evaluation metrics for SVG generation

Demonstrations

The following demonstration applies these metrics to five examples across several SVG generation tasks. Explore the interactive comparison below to see how different methods perform across Text→SVG, Image→SVG, and Text+Image→SVG tasks.

Figure 8: Interactive demo to compare SVG generation methods across different tasks. Use the tabs to switch between task types, and click on examples to see detailed metric comparisons. Use arrow keys (← →) to navigate images and number keys (1-4) to switch tasks.

Rasterized SVG metrics (guitar example): CLIP text-to-image can reward "overall vibe" even when the object is wrong (morphed guitar), suggesting weak keyword or object prioritization. CLIP image-to-image struggles to score shape and color trade-offs (OmniSVG gets the silhouette but misses color). Pixel metrics (e.g., MSE) overemphasize spatial alignment: OmniSVG scores well despite wrong colors, while Claude scores worse despite correct colors but mismatched geometry. Human-preference models can favor simplicity over useful detail (e.g., penalizing added strings).
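The pixel-metric behavior described above can be reproduced on a toy case. The Python sketch below uses made-up 4×4 RGB images (not the guitar example): a red square as the reference, a black square in the right place, and a red square shifted by one pixel. Under MSE, the wrong-color image scores better than the shifted one.

```python
WHITE, RED, BLACK = (1.0, 1.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, 0.0)


def draw_square(color, left, size=4):
    """Return a size x size RGB image with a 2x2 square in rows 1-2."""
    image = [[WHITE for _ in range(size)] for _ in range(size)]
    for row in (1, 2):
        for col in (left, left + 1):
            image[row][col] = color
    return image


def mse(a, b):
    """Mean squared error over every channel of every pixel."""
    total, count = 0.0, 0
    for row_a, row_b in zip(a, b):
        for px_a, px_b in zip(row_a, row_b):
            for ch_a, ch_b in zip(px_a, px_b):
                total += (ch_a - ch_b) ** 2
                count += 1
    return total / count


reference = draw_square(RED, left=0)
wrong_color = draw_square(BLACK, left=0)  # right silhouette, wrong color
shifted = draw_square(RED, left=1)        # right color, shifted one pixel

mse_wrong_color = mse(reference, wrong_color)
mse_shifted = mse(reference, shifted)
print(round(mse_wrong_color, 4), round(mse_shifted, 4))  # 0.0833 0.1667
```

The shifted square is penalized twice (once where the reference square is now uncovered, once where the new square lands), so a one-pixel misalignment costs more than getting the color entirely wrong.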

Code-level metrics: VTracer often yields the highest fidelity image-to-SVG outputs, but its SVG is ~10× longer and dominated by long cubic Bézier paths, making edits painful compared to more structured, commented ML-generated SVG, which highlights a trade-off between fidelity and readability.

<?xml version="1.0" encoding="UTF-8"?> <!-- Generator: visioncortex VTracer --> <svg id="svg" version="1.1" xmlns="http://www.w3.org/2000/svg" style="display: block;" viewBox="0 0 512 512"><path d="M0 0 C168.96 0 337.92 0 512 0 C512 168.96 512 337.92 512 512 C343.04 512 174.08 512 0 512 C0 343.04 0 174.08 0 0 Z " transform="translate(0,0)" style="fill: #FEFEFE;"/><path d="M0 0 C2.31 0 4.62 0 7 0 C7.33 9.24 7.66 18.48 8 28 C8.495 27.505 8.495 27.505 9 27 C10.69558726 26.90067847 12.39525747 26.86919993 14.09375 26.8671875 C15.12371094 26.86589844 16.15367187 26.86460937 17.21484375 26.86328125...
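As a rough sketch of the code-level metrics listed in Table 2 (code length, number of paths, number of commands), the Python snippet below counts them for two hand-written SVGs that draw the same square: one in a traced style using cubic Béziers, one as a single `<rect>`. The `code_metrics` helper and both SVG strings are illustrative assumptions; the "Weighted Complexity" score reported later in this post is not reproduced here because its formula is not given in this section.

```python
import re
import xml.etree.ElementTree as ET

# Path command letters defined by the SVG path grammar.
PATH_COMMANDS = re.compile(r"[MmZzLlHhVvCcSsQqTtAa]")


def code_metrics(svg_text):
    """Count simple code-level statistics for one SVG document."""
    root = ET.fromstring(svg_text)
    paths = [e for e in root.iter() if e.tag.split("}")[-1] == "path"]
    commands = sum(len(PATH_COMMANDS.findall(p.get("d", ""))) for p in paths)
    return {
        "code_length": len(svg_text),
        "num_paths": len(paths),
        "num_commands": commands,
    }


# Traced style: a square expressed as four cubic Bezier segments.
traced = (
    '<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 10 10">'
    '<path d="M0 0 C3 0 6 0 10 0 C10 3 10 6 10 10 '
    'C6 10 3 10 0 10 C0 6 0 3 0 0 Z"/>'
    "</svg>"
)
# Structured style: the same square as one primitive.
structured = (
    '<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 10 10">'
    '<rect width="10" height="10"/>'
    "</svg>"
)

traced_metrics = code_metrics(traced)
structured_metrics = code_metrics(structured)
print(traced_metrics)
print(structured_metrics)
```

Both files render the same square, yet the traced version needs six path commands and more characters, which is the fidelity-versus-readability trade-off in miniature. Note that this simple counter ignores primitives such as `<rect>` when counting commands.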

In addition to the visual demonstration, we benchmarked the following seven methods: VTracer (a classical image vectorization baseline), OmniSVG, StarVector (Image-to-SVG), NeuralSVG (Text-to-SVG), Gemini, GPT-4o, and Claude across three complexity tiers (Complex, Medium, Simple) for both Image-to-SVG and Text-to-SVG tasks. The results are organized into four metric groups below: code-level scores, pixel & distribution-level scores, alignment scores, and human-preference model scores. Bold values indicate the best result per metric within each task group. Note that CLIP text-to-image is omitted for Image-to-SVG because no text prompt is used in that task.

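| Model | Code Length (Complex) | Code Length (Medium) | Code Length (Simple) | Weighted Complexity (Complex) | Weighted Complexity (Medium) | Weighted Complexity (Simple) | Rendering Rate (%) ↑ (Complex) | Rendering Rate (%) ↑ (Medium) | Rendering Rate (%) ↑ (Simple) |

|---|---|---|---|---|---|---|---|---|---|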

| Image-to-SVG | |||||||||

| VTracer | 36902 | 17636 | 5166 | 2715 | 1245 | 342 | 100 | 100 | 100 |

| OmniSVG | 11205 | 9271 | 3284 | 887 | 771 | 264 | 87.7 | 92.2 | 97.9 |

| StarVector | 2281 | 1884 | 763 | 89 | 116 | 35 | 4.6 | 24.2 | 83 |

| Gemini | 2853 | 1378 | 438 | 145 | 66 | 15 | 100 | 100 | 99 |

| GPT-4o | 3486 | 1794 | 561 | 136 | 73 | 18 | 100 | 99 | 100 |

| Claude | 2035 | 1335 | 453 | 43 | 30 | 12 | 100 | 100 | 100 |

| Text-to-SVG | |||||||||

| OmniSVG | 10223 | 8931 | 4142 | 803 | 716 | 334 | 100 | 100 | 100 |

| NeuralSVG | 9131 | 9128 | 9130 | 208 | 208 | 208 | 100 | 100 | 100 |

| Gemini | 1785 | 1283 | 609 | 42 | 31 | 10 | 100 | 99 | 97 |

| GPT-4o | 4539 | 2584 | 1074 | 127 | 62 | 18 | 100 | 100 | 100 |

| Claude | 2953 | 1881 | 776 | 59 | 46 | 14 | 100 | 100 | 100 |

| Model | MSE ↓ (Complex) | MSE ↓ (Medium) | MSE ↓ (Simple) | FID-CLIP ↓ (Complex) | FID-CLIP ↓ (Medium) | FID-CLIP ↓ (Simple) |

|---|---|---|---|---|---|---|

| Image-to-SVG | ||||||

| VTracer | 0.0020 | 0.0014 | 0.0040 | 0.0097 | 0.0064 | 0.0151 |

| OmniSVG | 0.6777 | 0.7590 | 0.6068 | 0.3193 | 0.2213 | 0.1409 |

| StarVector | 0.6676 | 0.6905 | 0.5898 | 0.1977 | 0.1501 | 0.0973 |

| Gemini | 0.1004 | 0.0668 | 0.0579 | 0.0943 | 0.0635 | 0.0311 |

| GPT-4o | 0.0740 | 0.0569 | 0.0449 | 0.1118 | 0.0723 | 0.0292 |

| Claude | 0.1810 | 0.0815 | 0.1214 | 0.1347 | 0.0923 | 0.0513 |

| Text-to-SVG | ||||||

| OmniSVG | 0.1370 | 0.1635 | 0.1766 | 0.2506 | 0.1763 | 0.1064 |

| NeuralSVG | 0.2290 | 0.2407 | 0.2171 | 0.2203 | 0.2094 | 0.2023 |

| Gemini | 0.1751 | 0.1239 | 0.1464 | 0.1540 | 0.1196 | 0.0720 |

| GPT-4o | 0.0907 | 0.0935 | 0.1186 | 0.1553 | 0.1210 | 0.0736 |

| Claude | 0.1995 | 0.1383 | 0.1686 | 0.1553 | 0.1242 | 0.0784 |

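| Model | CLIP Score (text-to-image) ↑ (Complex) | CLIP Score (text-to-image) ↑ (Medium) | CLIP Score (text-to-image) ↑ (Simple) | CLIP Score (image-to-image) ↑ (Complex) | CLIP Score (image-to-image) ↑ (Medium) | CLIP Score (image-to-image) ↑ (Simple) |

|---|---|---|---|---|---|---|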

| Image-to-SVG | ||||||

| VTracer | N/A | N/A | N/A | 0.9932 | 0.9957 | 0.9867 |

| OmniSVG | N/A | N/A | N/A | 0.7424 | 0.8136 | 0.8693 |

| StarVector | N/A | N/A | N/A | 0.8922 | 0.9000 | 0.9226 |

| Gemini | N/A | N/A | N/A | 0.9290 | 0.9512 | 0.9742 |

| GPT-4o | N/A | N/A | N/A | 0.9157 | 0.9463 | 0.9768 |

| Claude | N/A | N/A | N/A | 0.8979 | 0.9281 | 0.9574 |

| Text-to-SVG | ||||||

| OmniSVG | 14.91 | 17.61 | 21.71 | 0.7450 | 0.7943 | 0.8569 |

| NeuralSVG | 15.32 | 18.86 | 22.07 | 0.8022 | 0.8143 | 0.8306 |

| Gemini | 18.92 | 22.71 | 26.86 | 0.8783 | 0.9022 | 0.9321 |

| GPT-4o | 18.57 | 22.37 | 26.04 | 0.8759 | 0.9002 | 0.9294 |

| Claude | 19.09 | 22.53 | 26.62 | 0.8776 | 0.8978 | 0.9253 |

| Model | HPSv2 ↑ (Complex) | HPSv2 ↑ (Medium) | HPSv2 ↑ (Simple) | PickScore ↑ (Complex) | PickScore ↑ (Medium) | PickScore ↑ (Simple) |

|---|---|---|---|---|---|---|

| Image-to-SVG | ||||||

| VTracer | 0.3898 | 0.3890 | 0.3591 | 22.02 | 22.13 | 22.03 |

| OmniSVG | 0.2059 | 0.2531 | 0.2815 | 18.41 | 19.37 | 20.33 |

| StarVector | 0.3318 | 0.3456 | 0.3366 | 20.68 | 20.61 | 20.95 |

| Gemini | 0.3757 | 0.3774 | 0.3677 | 21.18 | 21.59 | 21.89 |

| GPT-4o | 0.3631 | 0.3732 | 0.3608 | 20.95 | 21.59 | 21.91 |

| Claude | 0.3578 | 0.3629 | 0.3574 | 21.07 | 21.49 | 21.76 |

| Text-to-SVG | ||||||

| OmniSVG | 0.1852 | 0.2266 | 0.2665 | 18.48 | 19.25 | 20.38 |

| NeuralSVG | 0.2610 | 0.2635 | 0.2697 | 19.02 | 19.41 | 19.80 |

| Gemini | 0.3756 | 0.3761 | 0.3698 | 21.39 | 21.86 | 22.04 |

| GPT-4o | 0.3707 | 0.3669 | 0.3645 | 21.20 | 21.68 | 21.90 |

| Claude | 0.3768 | 0.3786 | 0.3708 | 21.51 | 21.83 | 21.97 |

Table 3: Baseline comparison across complexity levels. Methods are grouped by task type: Image-to-SVG (given a reference image) and Text-to-SVG (given only a text prompt). Bold indicates the best score per column within each task group.
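This post does not spell out how Rendering Rate is computed. As one plausible proxy, and purely as an assumption, the Python sketch below counts the share of outputs that parse as well-formed XML with an `<svg>` root. A real pipeline would more likely attempt an actual rasterization; the sample strings and helper names are made up.

```python
import xml.etree.ElementTree as ET


def parses_as_svg(svg_text):
    """True if the text is well-formed XML whose root element is <svg>."""
    try:
        root = ET.fromstring(svg_text)
    except ET.ParseError:
        return False
    return root.tag.split("}")[-1] == "svg"


def rendering_rate(outputs):
    """Percentage of outputs that pass the parse check (a proxy, see above)."""
    if not outputs:
        return 0.0
    ok = sum(parses_as_svg(text) for text in outputs)
    return 100.0 * ok / len(outputs)


# Hypothetical model outputs, including two typical failure modes.
samples = [
    '<svg xmlns="http://www.w3.org/2000/svg"><circle r="5"/></svg>',
    '<svg xmlns="http://www.w3.org/2000/svg"><path d="M0 0 L5 5"',  # truncated
    "<html><body>not an svg</body></html>",                         # wrong root
    '<svg xmlns="http://www.w3.org/2000/svg"><rect width="4" height="4"/></svg>',
]
rate = rendering_rate(samples)
print(f"{rate:.1f}%")  # 50.0%
```

Truncated output is a common failure for token-by-token generators that hit a length limit, which is one way a model can score well on other metrics yet have a low rendering rate.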

Key takeaway: VTracer, a classical image vectorization method, sweeps most of the fidelity metrics for the Image-to-SVG task, but at the cost of much longer and more complex SVG code that is impractical to edit. Among ML methods, proprietary models (Gemini, GPT-4o, Claude) generally outperform dedicated open-source models (OmniSVG, StarVector/NeuralSVG) on alignment, preference, and pixel-fidelity metrics while producing compact, reliably renderable SVG.

In summary, while existing metrics are correlated with "quality," they are approximations of what we are aiming for and misleading at times. In addition, we observe a noticeable gap in performance between open-source models and proprietary models, which suggests substantial room for further research in the open-source community.

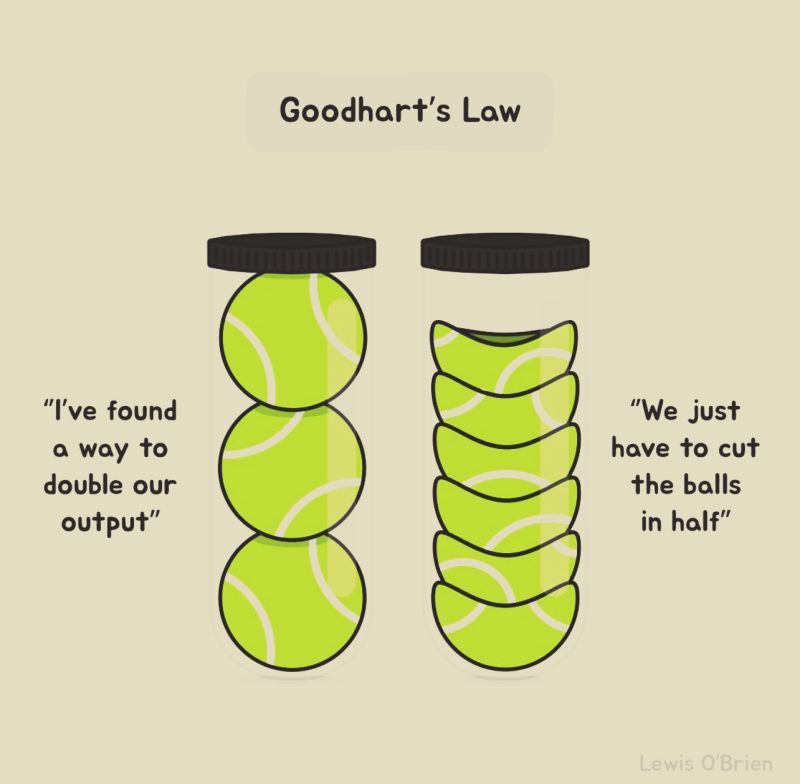

Figure 9: Misaligned objective

What is a good objective?

Alignment

Does the output match the intent (prompt or source image), not just visually, but structurally? Should a "logo" request produce a single cohesive group, while a "diagram" request produces labeled, semantically organized layers? How do we balance shapes and colors?

Visual Quality

Beyond pixel-level metrics with respect to a reference image, how do we measure aesthetic properties like balance, proportion, and style consistency that matter in real design contexts?

Editability

Metrics such as code length offer an initial complexity assessment, but the question remains: how do we quantify the "designer-readiness" of an SVG? Layer organization, naming conventions, and adherence to design system constraints determine whether a vector asset can actually be maintained in production, so a useful metric needs to measure them.

Figure 10: Three complementary dimensions of SVG generation for production motivated by our discussion above.

Conclusion

As this post shows, generating production-ready SVG assets is still an open problem. Figure 10 in particular highlights the need for improved evaluation metrics along the three complementary dimensions.

Until we develop metrics that capture these practical dimensions, SVG generation will continue to struggle without a proper goalpost.

Stay tuned for our upcoming technical report, where we’ll introduce our in-house method for machine learning based SVG generation and the improvements it enables.

Footnote: For interested readers, the Awesome-SVG-Generation [12] and Awesome-Sketch-Synthesis [13] lists are good starting hubs.

References:

1. https://en.wikipedia.org/wiki/SVG

2. https://w3techs.com/technologies/details/im-svg

3. https://www.adobe.com/creativecloud/file-types/image/vector/svg-file.html

4. https://www.w3.org/TR/SVG2/

5. https://developer.mozilla.org/en-US/docs/Web/SVG

6. https://www.bls.gov/ooh/arts-and-design/graphic-designers.htm

7. https://www.bls.gov/ooh/arts-and-design/multimedia-artists-and-animators.htm

8. https://helpx.adobe.com/in/illustrator/using/image-trace.html

9. https://github.com/svg/svgo

10. https://lottiefiles.com/supported-features

11. https://github.com/airbnb/lottie/blob/master/supported-features.md

12. https://github.com/Melmaphother/Awesome-SVG-Generation?tab=readme-ov-file

13. https://github.com/MarkMoHR/Awesome-Sketch-Synthesis?tab=readme-ov-file

14. https://github.com/ZJU-REAL/SVGenius/tree/main

15. https://www.upwork.com/hire/illustrators/cost/

16. https://www.behance.net/gallery/84052555/Paint-Class-GIF

17. https://vectorwiz.com/raster-vs-vector-images/

18. https://worldvectorlogo.com/logo/pinterest-2-1

19. https://lightning.ai/docs/torchmetrics/stable/multimodal/clip_score.html

20. https://lightning.ai/docs/torchmetrics/stable/image/frechet_inception_distance.html

21. https://github.com/RE-N-Y/imscore